“productive discomfort”

In case you missed it last week, sneakerweb is our new project where users exchange and collect websites via physical media like USB sticks. This website data is stored locally (with Willow) and made accessible via a local web server.

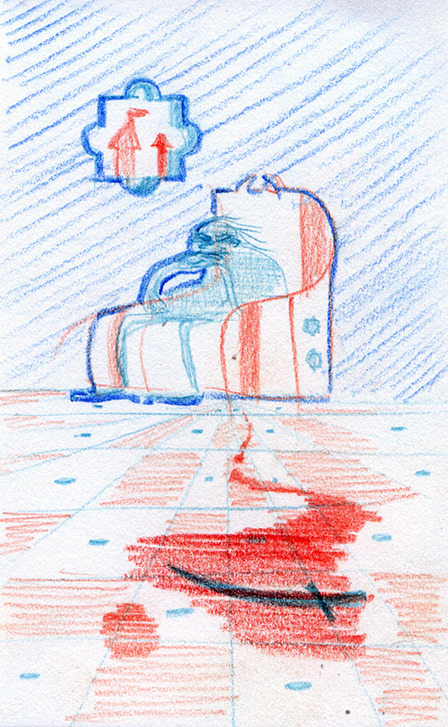

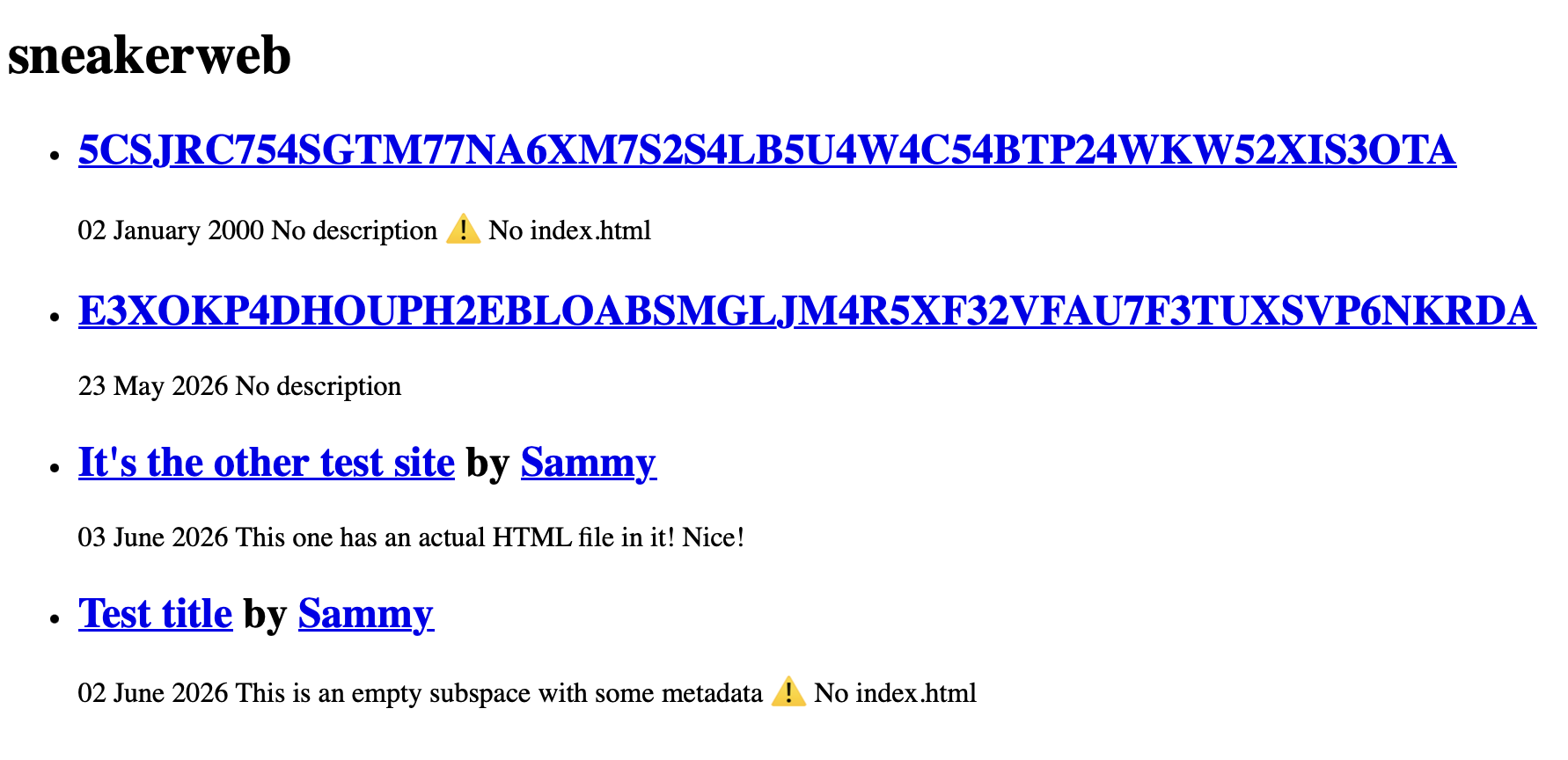

One of the nice things about having website data on disk is that you can build a complete overview of everything you’ve collected over time. So I’ve been designing and building a ‘front page’ to the sneakerweb which you’ll see if you browse to sneakerweb.localhost.

But what should this front page look like? We really want to do better than a list of base32-encoded public keys. I started looking into the designs of search engines of yore, and of Gemini gemlogs (which have a very elegant, clear presentation). We’re not at the point where we’re going to crawl sneakerweb site contents, so maybe authors could provide some metadata for us?

A .sneakerweb file could provide some details like a title, a description, and an author, and we already know when the site was last updated through Willow. We could then use that data to build something that looks a bit like an old search engine results page.

Sadly it evokes the spirit of dullness. It requires a culture of self-volunteering bureaucracy, and agreeing with our taxonomy of websites (e.g. does a site always have a single author? A title? A description?).

Maybe search engine results are a dead end, and instead I should be looking at webrings. Friend of the site Miaourt linked me to Nekoweb, a hosting service where sites are presented as tiles in a grid, and the owners seem to have a lot of latitude in how their tile is presented. Some of them follow a fixed format, and some break out of it completely.

I love giving space to arbitrariness, so I am going to rip out the metadata stuff I’ve been working on and try to give way to authors’ ideas of how their site should present itself. I’m not sure yet if this will be as simple as an image file, or a tiny iframe pointed at some little sneakerweb.html page.

~sammy

Ourcana, or Data Rippling Out

Our first interview on worm-blossom.org! We chat with Sarah Aronson, aka Heni, about her app Ourcana, and its underlying Willow implementation... in Dart! And... 10,000 soon-to-be Willow users!!!

Sammy: Sarah! I guess the best place to start would be by introducing yourself.

Sarah: Hi, I’m Sarah Aronson. I’ve been programming since high school when I worked on FIRST Robotics Competition robots. High schoolers program robots that fight each other. Professionally, I’ve worked in places like the Johns Hopkins Applied Physics Lab with a modular prosthetic limb. I was at the Federal Railroad Administration doing graph theory for them in a pretty practical and applied way. I worked for a trucking company as their machine learning architect, before AI really meant Gen AI.

Sarah: And in the end, I decided I wanted to work on an app for myself, and that’s what I’ve been doing for the past year or so. I want to write something that’s going to benefit me, going to benefit others, help others. And eventually, this space for the app, Ourcana, just sort of opened up.

Sammy: Naturally leading to the question of “what’s Ourcana”?

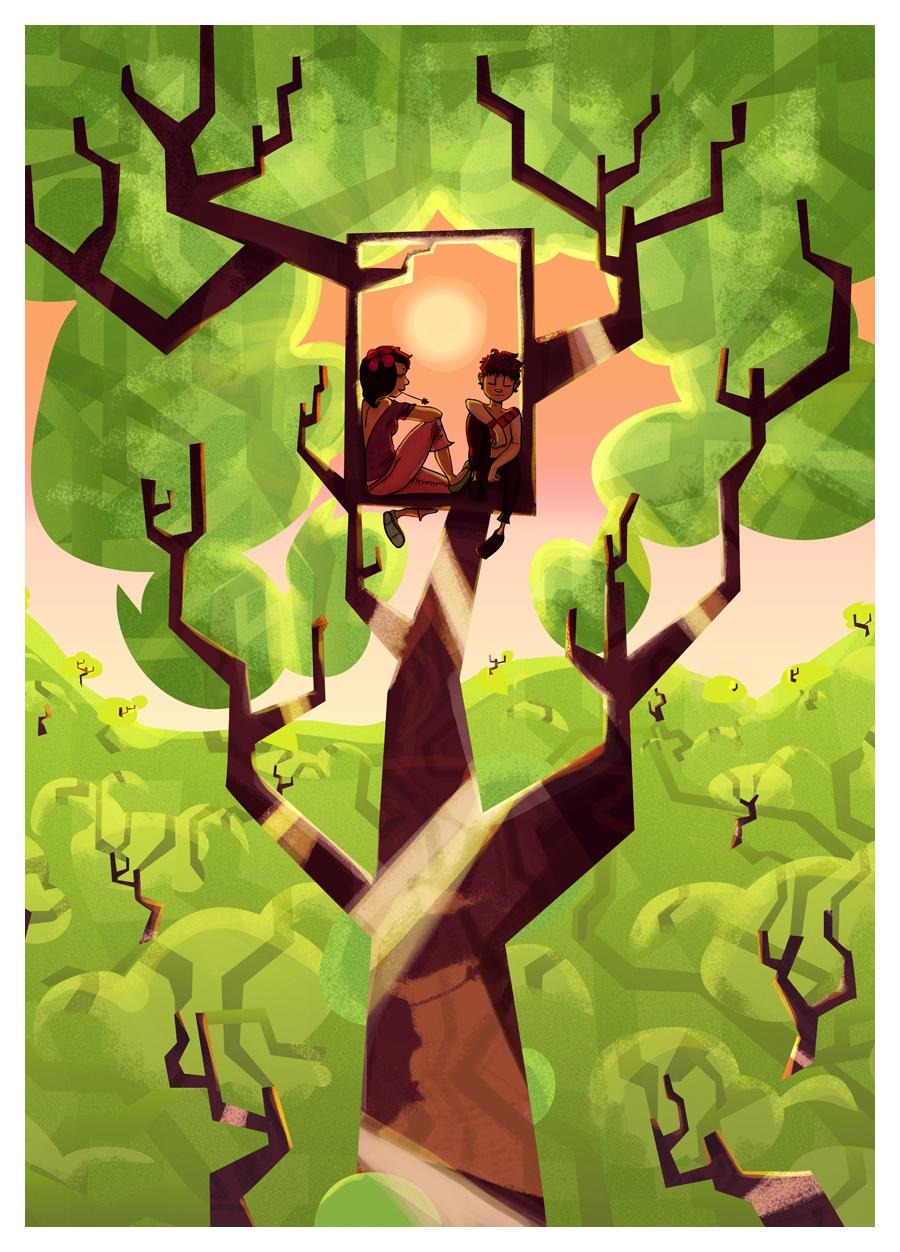

Sarah: To give some context, there was an app called Simply Plural. The plural community is a community that identifies with the experience of what is sometimes referred to as Dissociative Identity Disorder, or the experience of having different personality states. A lot of people who are adults naturally have this. You have a work personality, a friend personality.

Sarah: But for individuals who are plural, these are a lot more concrete. They like to have applications that give them logs. So I can have a log on Ourcana or Simply Plural where I describe what maybe my work personality is. And I give some notes like: “OK, I notice I like coffee; I like to talk to people this way; I’m most productive when I do this or that thing”.

Sarah: And then when people using these apps go to a therapeutic session to work with a professional to improve their mental health, or when maybe things are a little tough and they need to check their notes, so to speak, they open these apps and check, “OK, where am I? What was I doing? What context did I have?”, and it can be pretty helpful.

Sammy: When you started building Ourcana, what were the design challenges from a technical and experiential perspective?

Sarah: In March, the Simply Plural website and its developer announced the app would shut down, probably in June or July. The community was in a bit of a panic, and a lot of replacement apps popped up. And many of them fell into the same class of problems. For some, there was drama or accusations, saying, “The data on the server is not encrypted. How do we know you’re not reading user data”? Other times, very nefarious apps would say, “Well, we are reading user data, and you’re not allowed to use our app if you do X, Y, Z”.

Sammy: Oh.

Sarah: I thought that that was untenable for privacy. I needed a way to communicate securely, authenticate who I am talking to, know that a message is not fake, as well as a layer of encryption. Before Ourcana, I was working on Fluffy Chat with the matrix.org protocol, which is an end-to-end encrypted (or at least end-to-end encryption capable) chat for online communities that’s self-hosted and federated. And I realized that that kind of technology was probably the direction I wanted to go. But the nature of very fast synchronous chats is not necessarily aligned with document storage and creating journals and fine-grained permission systems.

Sarah: So that was what I was thinking about. How do I do permissions? How do I do authentication? How do I add encryption on top? I need some kind of protocol to wrap it all together. And that’s really what I was thinking about in the development of Ourcana, at least to start.

Sammy: It also sounds like the trauma of Simply Plural shutting down and taking everything with them led to you wanting a solution which would make that situation impossible, or at least provide a fallback.

Sarah: Absolutely. What really struck me when Simply Plural went down was that a lot of people were left asking questions. What happened? Does my data work offline? Can I keep my data? Can I export my data? And the answers were, frankly, “We don’t know. We haven’t tested it”.

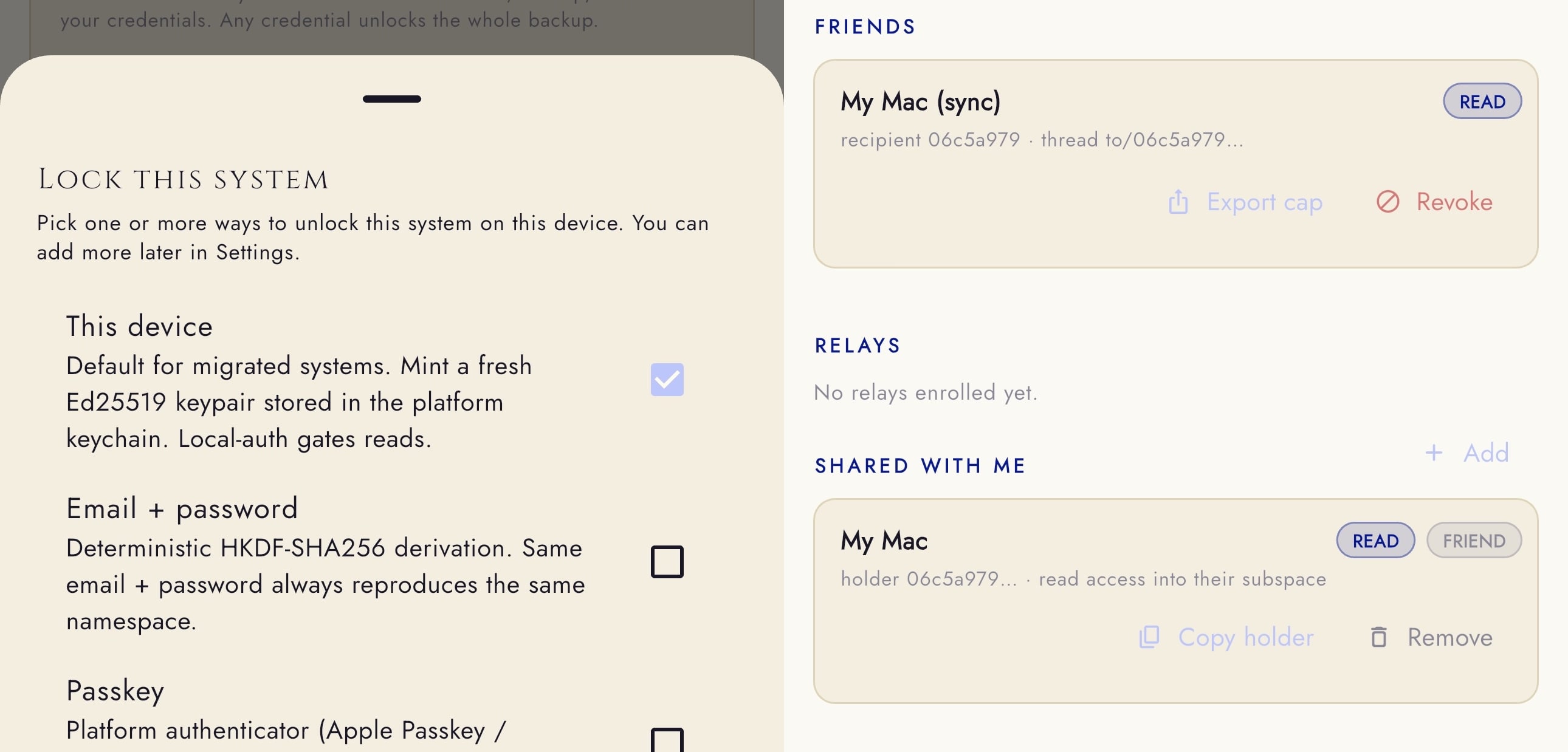

Sarah: So I wanted to create Ourcana with an open source server from the start, and the idea that any client can connect to any number of servers.

Sarah: I think I mentioned [on the worm-blossom Discord server] the idea of dropping a rock in a pond and the data ripples out. To me, that means I change a document, and several external servers eventually get my data through consistency sync. And that means if one or two go down, well, I’ve got my data on the remote servers. I’ve got a copy of the data on my phone.

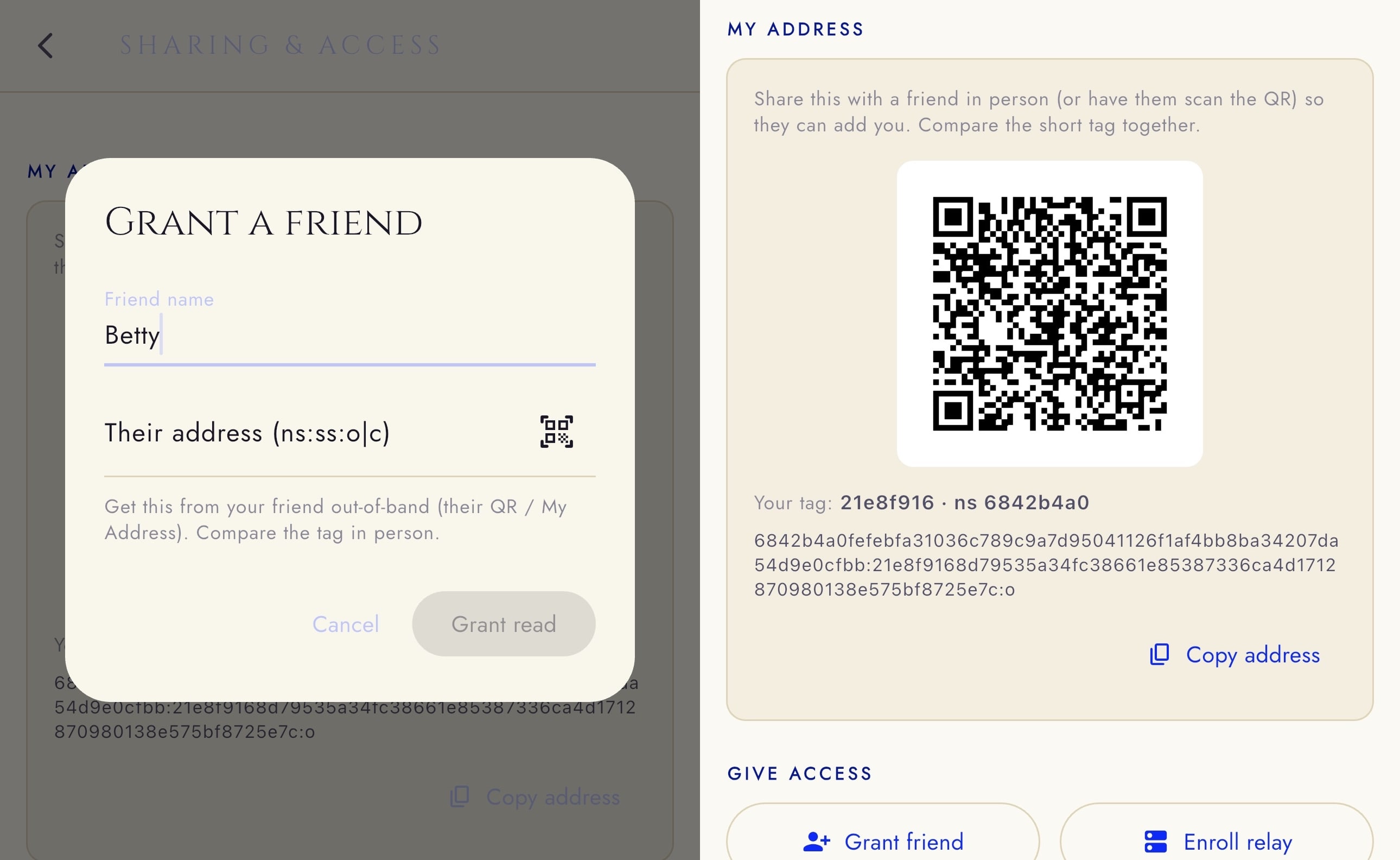

Sarah: And what really interested me about Willow was this idea that I could hold onto data for my friends without being able necessarily to mutate it, tamper with it, and if encrypted, perhaps even read it. I really like the idea that, say, my partner could send me a copy of her data, keep some of it private, and I’d have a backup for my partner on my phone. So no matter what happens — server goes down, her phone explodes — I can say, “here you go”. My number one design concern was ensuring that Ourcana doesn’t crash and burn like Simply Plural does and everyone loses their data and we’re back in the current situation again.

Sammy: Right. You’ve got a kind of communal backup system.

Aljoscha: One of the slogans of Secure Scuttlebutt was “Your friends are the data centre”.

Sarah: Yeah, I saw that. I got that sense from Willow too. This idea that your friends can hold onto things for you. And I thought, wow, that’s really how you do sharding of data. That’s multiple backups, off-site backups, the whole 3-2-1 plan in one go. And then there was the perspective of putting it onto devices with Flutter, and on servers with Rust.

Sammy: Right, Flutter. You’ve written your own implementation of Willow in Dart. How does that relate to our Rust implementation?

Sarah: Rust is compiled to assembly ahead of time. That makes Rust very powerful for bare metal engineering. But it’s not actually all that portable. When I wanted to create Ourcana, I thought about every platform. I thought about iOS, Android, Windows, Mac, Linux, web, and I realized only really Flutter and Dart can do this. Flutter is the SDK, Dart is the underlying language that runs on its virtual machine.

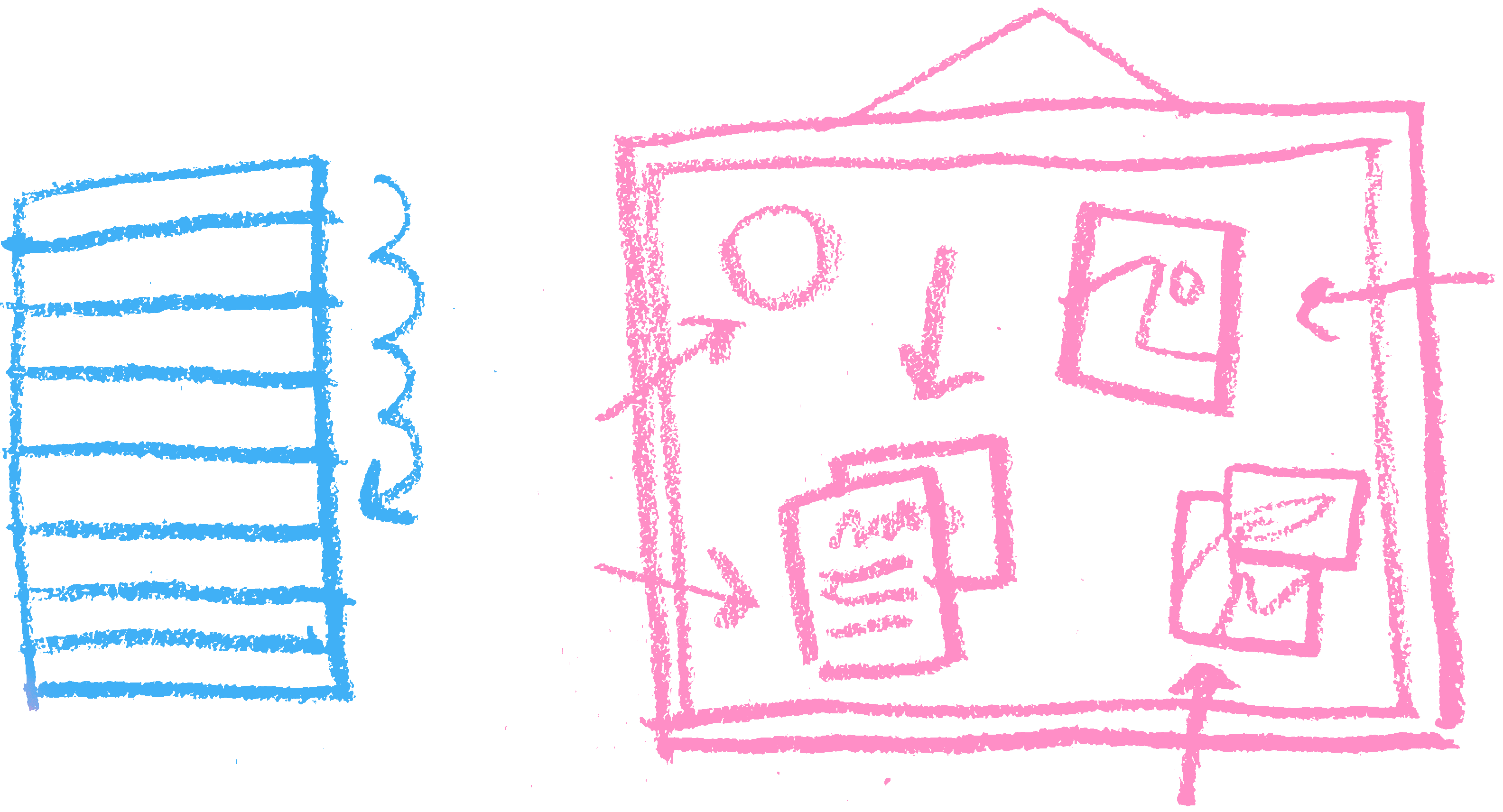

Sarah: Dart, frankly, is very slow. It’s like JavaScript, perhaps even worse. Where it pays off is that it lets you run the same code everywhere in a portable way. And I knew that if I could match the Rust implementation over the wire in a bit-perfect way, I would know that I’d gotten the Dart implementation right.

Sarah: So to me, the Rust code is something that you run on a big node that is always on, always listening maybe. But it’s a service. Flutter and Dart are for clients, clients that wake up, do something, and then you put your phone down and you go about your life. That, to me, is the difference. It’s a trade-off between performance and scalability on the one side, and highly portable client use on the other.

Sarah: And I’ll mention that I plan to release the Willow Dart submodule, the client I have that runs the WTP draft spec, as open source. I want to make sure that’s available to everybody so they can also interop with this cool Rust server I’ve dropped onto the internet.

Sammy: Ourcana is something that people are using already, isn’t it?

Sarah: Yes. I don’t have analytics in the app as a privacy decision. But as far as I can estimate from Discord users and app installs, we’re looking at 9,000 users, maybe even 10,000. I haven’t even counted web. Web is the other big platform; most people just sort of go to ourcana.space, hit Enter, start using it.

Sammy: Fantastic. So, we can actually put the headline “10,000 Willow users!!!” at the top of this interview!

Sarah: Well I’m hoping to get the update with all the Willow stuff out on Friday. I can’t wait to ship something functional to users based on this tech. I’m so excited. I’ve been developing like crazy, just testing, testing, breaking things a lot.

Sammy: Well, I think I can speak for Aljoscha as well when I say that it’s extremely exciting for us to see these designs used in the real world. And not for a weapons platform or something, you know? Actually something nice.

Sarah: Well, I mean, for me, it’s, you know, I would like to securely communicate with my friends and loved ones. And I think that’s what it’s about.

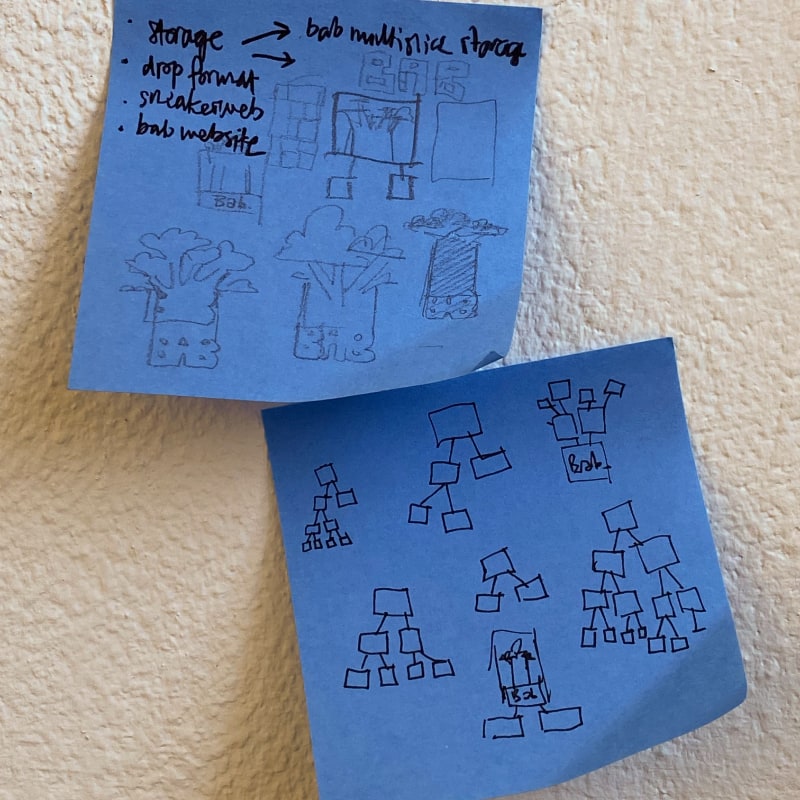

I had quite the productive week! The fuzz test I mentioned last week for exterminating bugs in the Rust Bab implementation? It doesn’t crash anymore. It was almost a bit anticlimactic: I expected right label omission to be the trickiest part, so I left that for the end. Left label omission gave me some trouble first. Once I fixed that, I turned on right label omission in the fuzzer, but everything continued working. Looks like I actually got those parts right immediately. Well, I won’t complain. The new code is released as bab_rs 0.6.0. Next up: implementing a backend that can store multiple non-overlapping subslices of the same string.

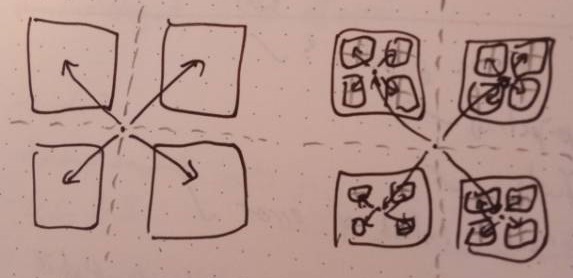

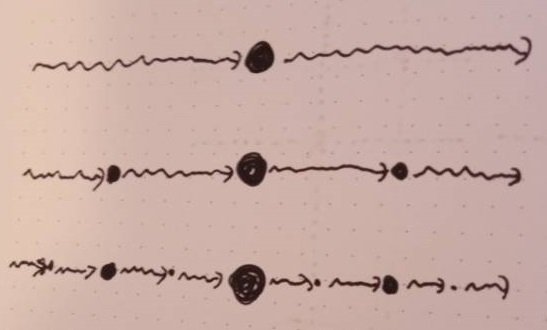

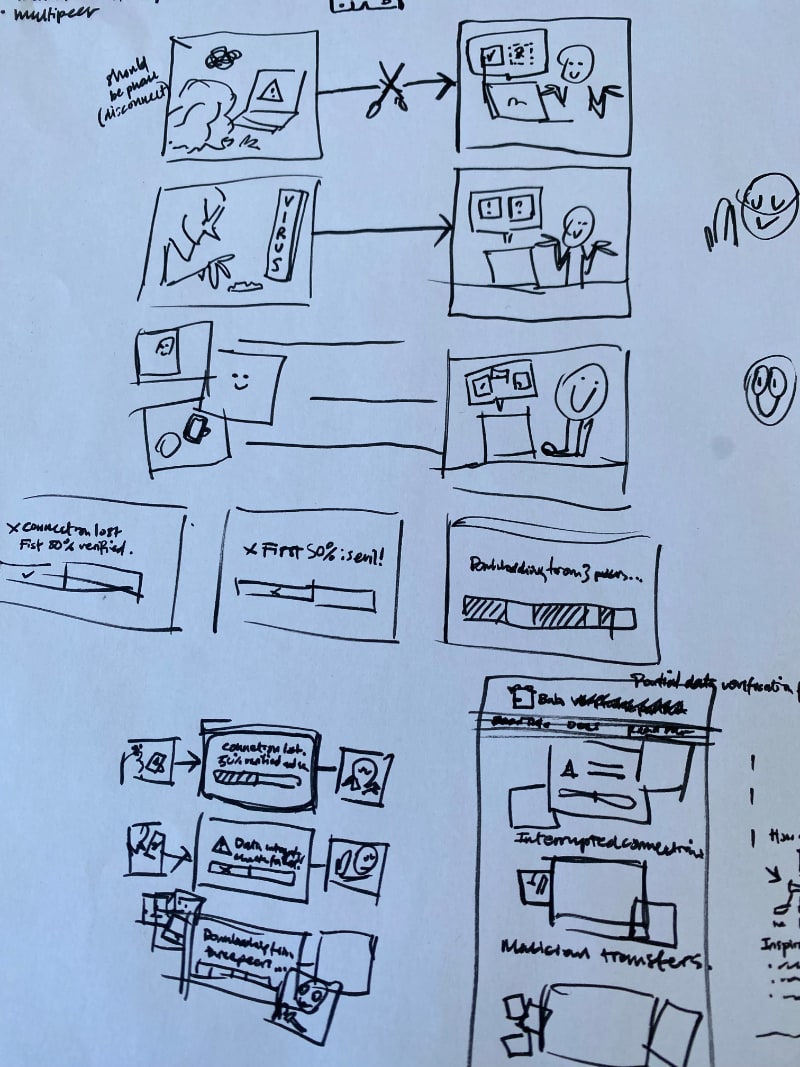

But that is not all: in working on the sneakerweb, we decided to revisit the Willow Drop Format specification. In a nutshell: the old version of the spec would first encode the total number of entries in the drop, followed by the concatenation of the encodings of those entries. Analogously, for each entry, it would first encode the total number of payload slices for that entry, followed by the encodings of these slices. This sounds fairly obvious, but it comes with a problem: when the data store is modified while a drop is being created, the number of entries (or the number of payload slices of some entry) might be invalidated, resulting in an invalid drop. This makes drop creation fallible, not to mention somewhat more tricky to implement.

So I changed it to a less-upfront-more-streaming-friendly encoding approach where each entry (and each subslice) simply indicates whether it is the final one or not. Basically what I was ranting about for variable length integer encodings, except that here we now chose the less efficient but more flexible approach.
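
To make the difference concrete, here is a minimal Rust sketch of the two styles. It is only an illustration: the framing (one length byte per entry, a one-byte count or flag) is a made-up toy and not the actual Willow Drop Format, and all function names are hypothetical.

```rust
/// Toy framing, NOT the Willow Drop Format: every entry is a byte string of
/// at most 255 bytes, written as one length byte followed by the bytes.
fn push_entry(out: &mut Vec<u8>, entry: &[u8]) {
    assert!(entry.len() <= 255, "toy format only supports short entries");
    out.push(entry.len() as u8);
    out.extend_from_slice(entry);
}

/// Upfront style: the total count comes first, so it must be known (and must
/// stay correct) before the first entry is written.
fn encode_counted(entries: &[&[u8]]) -> Vec<u8> {
    assert!(entries.len() <= 255, "toy format only supports a one-byte count");
    let mut out = vec![entries.len() as u8];
    for entry in entries {
        push_entry(&mut out, entry);
    }
    out
}

/// Streaming style: each entry is preceded by a flag byte
/// (1 = this is the final entry, 0 = more entries follow).
/// The encoder only ever needs to look one entry ahead.
fn encode_flagged<'a>(entries: impl IntoIterator<Item = &'a [u8]>) -> Vec<u8> {
    let mut out = Vec::new();
    let mut iter = entries.into_iter().peekable();
    while let Some(entry) = iter.next() {
        let is_final = iter.peek().is_none();
        out.push(is_final as u8);
        push_entry(&mut out, entry);
    }
    out
}
```

In this toy, the flagged variant costs one extra byte per entry instead of one count for the whole drop, which is the efficiency trade-off in miniature. In exchange, the encoder accepts any iterator and never has to commit to a total in advance.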

This decision was not easy. Sacrificing efficiency hurts. Not only is an upfront encoding slightly more compact, but also a decoding process can allocate resources more efficiently when it knows these counts in advance. On the other hand, optimisations based on these counts would also be a bit of a footgun, because the counts cannot actually be trusted. A malicious drop might state that it has a large quantity of entries, so that the decoder would allocate vast amounts of resources. So in some sense, the new design prevents brittle implementations.
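
The footgun can be sketched as follows. This is a hedged illustration, not code from any Willow implementation: the toy framing (a four-byte big-endian count, then entries with one length byte each), the function name `decode_counted`, and the `MAX_PREALLOC` constant are all assumptions made up for this example. The point is that a careful decoder has to treat the claimed count as a hint only.

```rust
/// Hypothetical upper bound on how many slots we are willing to reserve
/// based on a count we have not verified yet.
const MAX_PREALLOC: usize = 1024;

/// Decodes a toy count-prefixed list: a 4-byte big-endian entry count,
/// followed by entries that each consist of one length byte and the bytes.
/// Returns `None` if the input ends before the claimed count is reached.
fn decode_counted(input: &[u8]) -> Option<Vec<Vec<u8>>> {
    let header = input.get(..4)?;
    let count = u32::from_be_bytes([header[0], header[1], header[2], header[3]]) as usize;
    let mut rest = &input[4..];

    // The claimed count cannot be trusted, so reserve at most MAX_PREALLOC
    // slots up front. A naive `Vec::with_capacity(count)` would let a tiny
    // malicious input request a huge allocation.
    let mut entries = Vec::with_capacity(count.min(MAX_PREALLOC));

    for _ in 0..count {
        let (&len, tail) = rest.split_first()?;
        let body = tail.get(..len as usize)?;
        entries.push(body.to_vec());
        rest = &tail[len as usize..];
    }
    Some(entries)
}
```

With the cap in place, an input that claims billions of entries but contains only a handful of bytes is rejected as soon as the data runs out, having reserved no more than `MAX_PREALLOC` slots.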

But the main argument that convinced me to go with the new approach was an observation that Sarah made: if you do not need to specify the entry count upfront, then you can create drops in a completely streaming fashion. Instead of writing a drop to a file, you could write to a TCP connection. You would never set the this-is-the-final-entry flag, so you could always continue appending new entries. Bam, instant one-directional streaming sync for Willow! Sammy immediately coined the term “dripdrop” for this usage of the drop format.
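
As a rough sketch of the idea (again with a made-up toy framing rather than the real drop format, and with hypothetical names such as `DripDropper`, `drip` and `finish`), a dripdropping writer could wrap anything that implements `std::io::Write`, whether that is a file, a `TcpStream`, or an in-memory buffer:

```rust
use std::io::{self, Write};

/// Toy framing, NOT the Willow Drop Format: each entry is a flag byte
/// (0 = more entries may follow, 1 = final entry), one length byte,
/// and then the entry bytes.
struct DripDropper<W: Write> {
    sink: W,
}

impl<W: Write> DripDropper<W> {
    fn new(sink: W) -> Self {
        DripDropper { sink }
    }

    /// Appends one entry and leaves the drop open for further entries.
    fn drip(&mut self, entry: &[u8]) -> io::Result<()> {
        self.write_entry(0, entry)
    }

    /// Writes a final entry, closing the drop, and hands back the sink.
    /// A pure dripdrop stream would simply never call this.
    fn finish(mut self, entry: &[u8]) -> io::Result<W> {
        self.write_entry(1, entry)?;
        Ok(self.sink)
    }

    fn write_entry(&mut self, flag: u8, entry: &[u8]) -> io::Result<()> {
        if entry.len() > 255 {
            return Err(io::Error::new(
                io::ErrorKind::InvalidInput,
                "entry too long for the toy format",
            ));
        }
        self.sink.write_all(&[flag, entry.len() as u8])?;
        self.sink.write_all(entry)?;
        // Flush so that a network peer sees each entry as soon as it is written.
        self.sink.flush()
    }
}
```

Because the writer is generic over `Write`, the same code produces a finite drop on disk (call `finish` at the end) or an open-ended stream over a connection (keep calling `drip`).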

I’m particularly interested in this usage because the WTP currently lets the client stream changes to the server. This frankly does not fit well into the pull-based WTP, but I feared that the WTP would have been a lot less useful without clients being able to proactively place their data on a server. Now, this could be achieved by dripdropping instead! So there is a possible future where I get to remove roughly a third of the WTP spec, because dripdropping achieves the same goal. Exciting!

So I ended up tweaking the spec with a specific eye on making dripdropping more efficient. There are some neat changes that effectively allow sending verifiable slice streams for payloads, whereas before drops contained only the bare minimum of Bab verification metadata (because drops are meant to be ingested in one go, not incrementally, after all). You could already include incremental verification metadata in the old format, but it would have been fairly inefficient. The new spec makes this scenario a whole lot more efficient to encode.

The drop format is still very much about working with static data sets in bulk; this hasn’t changed. It just so happens that the new encoding is also convenient and efficient for dripdropping. What a happy coincidence!

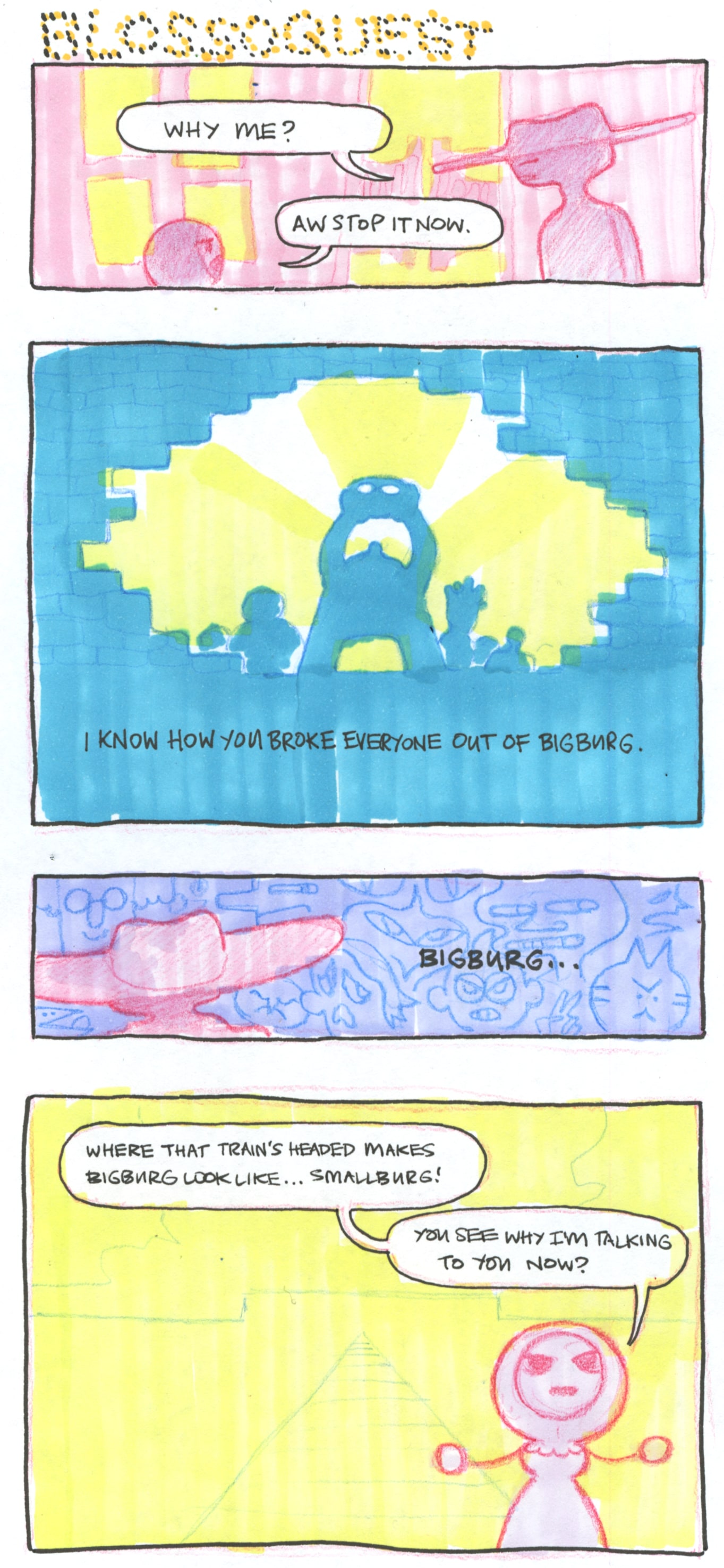

And finally: Sammy, next Blossoquest update when?!

~Aljoscha